Introduction to Microsoft Azure Cognitive Service

Microsoft Cognitive Services (earlier known as Project Oxford) lets us build intelligent applications by writing just a few lines of code. These applications or services can be deployed on major platforms like Windows, iOS, and Android. The APIs are built on machine learning and enable developers to easily add intelligent features to their applications, such as emotion and video detection; facial, speech and vision recognition; and speech and language understanding.

In this article, I will walk step by step through the Face and Emotion APIs of Microsoft Cognitive Services. The article is divided into three parts, and I suggest going through them in the order given below:

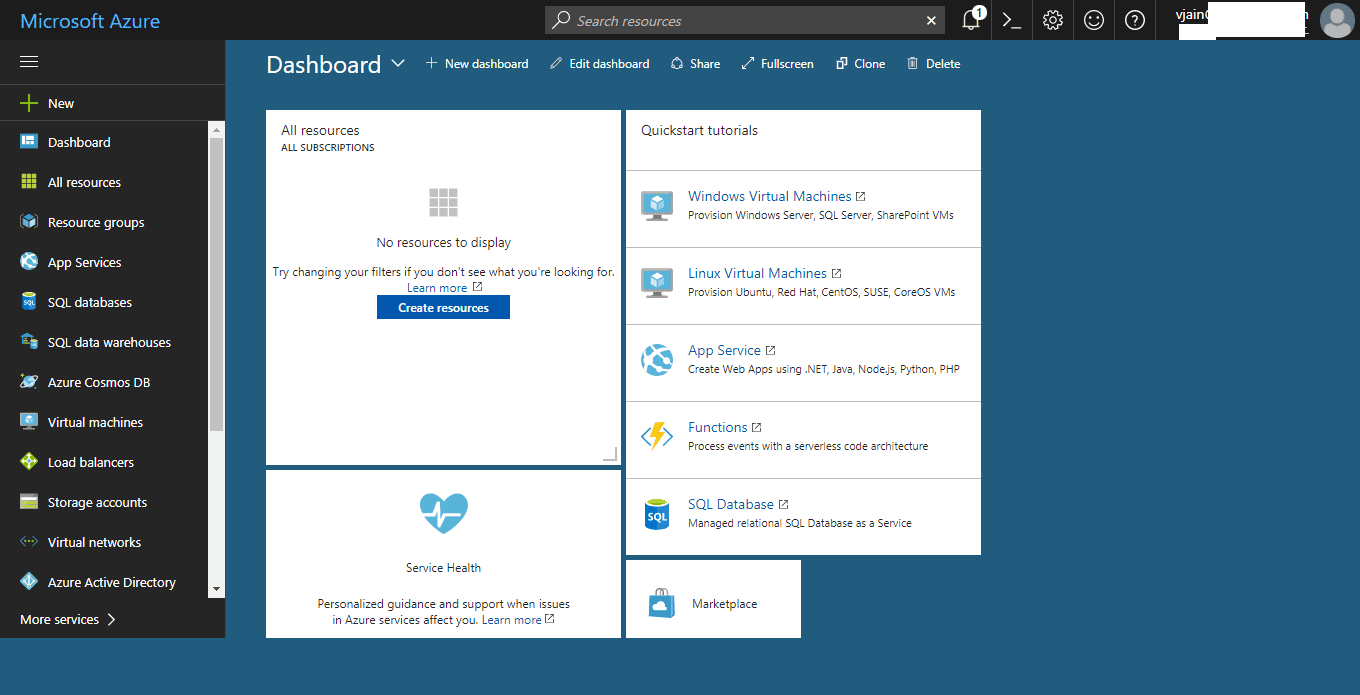

The prerequisites for working with the Microsoft Cognitive Services APIs are:

Part 2

FACE API using Azure Cognitive Service

Face API, provided by Microsoft Cognitive Services, helps to detect, analyse and organize the faces in a given image. We can also tag faces in any given photo.

Step 2 – Once you click on “AI + Cognitive Services”, you will see the list of APIs available in Cognitive Services:

Step 3 – Choose Face API to subscribe to the Microsoft Cognitive Services Face API, and proceed with the subscription steps. After you click on Face API, the legal page is shown. Read it carefully and then click on Create.

In my case, the generated Keys and Endpoint URL are shown below:

    <Window x:Class="Face_Tutorial.MainWindow"
            xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
            xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"
            Title="MainWindow" Height="700" Width="960">
        <Grid x:Name="BackPanel">
            <Image x:Name="FacePhoto" Stretch="Uniform" Margin="0,0,0,50" MouseMove="FacePhoto_MouseMove" />
            <DockPanel DockPanel.Dock="Bottom">
                <Button x:Name="BrowseButton" Width="72" Height="20" VerticalAlignment="Bottom" HorizontalAlignment="Left"
                        Content="Browse..." Click="BrowseButton_Click" />
                <StatusBar VerticalAlignment="Bottom">
                    <StatusBarItem>
                        <TextBlock Name="faceDescriptionStatusBar" />
                    </StatusBarItem>
                </StatusBar>
            </DockPanel>
        </Grid>
    </Window>

Once you add these two DLLs, they appear in the solution as follows:

    private async void BrowseButton_Click(object sender, RoutedEventArgs e)
    {
        // Get the image file to scan from the user.
        var openDlg = new Microsoft.Win32.OpenFileDialog();
        openDlg.Filter = "JPEG Image(*.jpg)|*.jpg";
        bool? result = openDlg.ShowDialog(this);

        // Return if canceled.
        if (!(bool)result)
        {
            return;
        }

        // Display the image file.
        string filePath = openDlg.FileName;
        Uri fileUri = new Uri(filePath);
        BitmapImage bitmapSource = new BitmapImage();
        bitmapSource.BeginInit();
        bitmapSource.CacheOption = BitmapCacheOption.None;
        bitmapSource.UriSource = fileUri;
        bitmapSource.EndInit();
        FacePhoto.Source = bitmapSource;

        // Detect any faces in the image.
        Title = "Detecting...";
        faces = await UploadAndDetectFaces(filePath);
        Title = String.Format("Detection Finished. {0} face(s) detected", faces.Length);

        if (faces.Length > 0)
        {
            // Prepare to draw rectangles around the faces.
            DrawingVisual visual = new DrawingVisual();
            DrawingContext drawingContext = visual.RenderOpen();
            drawingContext.DrawImage(bitmapSource,
                new Rect(0, 0, bitmapSource.Width, bitmapSource.Height));
            double dpi = bitmapSource.DpiX;
            resizeFactor = 96 / dpi;
            faceDescriptions = new String[faces.Length];

            for (int i = 0; i < faces.Length; ++i)
            {
                Face face = faces[i];

                // Draw a rectangle on the face.
                drawingContext.DrawRectangle(
                    Brushes.Transparent,
                    new Pen(Brushes.Red, 2),
                    new Rect(
                        face.FaceRectangle.Left * resizeFactor,
                        face.FaceRectangle.Top * resizeFactor,
                        face.FaceRectangle.Width * resizeFactor,
                        face.FaceRectangle.Height * resizeFactor
                    )
                );

                // Store the face description.
                faceDescriptions[i] = FaceDescription(face);
            }

            drawingContext.Close();

            // Display the image with the rectangle around the face.
            RenderTargetBitmap faceWithRectBitmap = new RenderTargetBitmap(
                (int)(bitmapSource.PixelWidth * resizeFactor),
                (int)(bitmapSource.PixelHeight * resizeFactor),
                96,
                96,
                PixelFormats.Pbgra32);

            faceWithRectBitmap.Render(visual);
            FacePhoto.Source = faceWithRectBitmap;

            // Set the status bar text.
            faceDescriptionStatusBar.Text = "Place the mouse pointer over a face to see the face description.";
        }
    }
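The `resizeFactor = 96 / dpi` step in the handler above converts the pixel coordinates returned by the Face API into WPF's device-independent units (1/96 inch). The following is a minimal, standalone sketch of that arithmetic only. The class and method names (`DpiScaling`, `ResizeFactor`, `ScaleRect`) are hypothetical helpers introduced for illustration and are not part of the tutorial project or the Face API library.

```csharp
using System;

// Hypothetical helper, for illustration only: mirrors the resizeFactor
// arithmetic used in BrowseButton_Click.
static class DpiScaling
{
    // WPF lays out content in device-independent units of 1/96 inch.
    public const double WpfDpi = 96.0;

    // Factor that maps image pixels to WPF units for an image of the given DPI.
    public static double ResizeFactor(double imageDpi)
    {
        return WpfDpi / imageDpi;
    }

    // Scales a pixel rectangle and returns { left, top, width, height }.
    public static double[] ScaleRect(int left, int top, int width, int height, double factor)
    {
        return new[] { left * factor, top * factor, width * factor, height * factor };
    }
}
```

For example, a 192 DPI image gives a factor of 0.5, so a face rectangle at (100, 40) with a size of 60 x 60 pixels would be drawn at (50, 20) with a size of 30 x 30 units. A 96 DPI image gives a factor of 1, so the coordinates are used unchanged.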

Add a new async method named UploadAndDetectFaces(), which accepts imageFilePath as a string parameter.

    // Uploads the image file and calls Detect Faces.
    private async Task<Face[]> UploadAndDetectFaces(string imageFilePath)
    {
        // The list of Face attributes to return.
        IEnumerable<FaceAttributeType> faceAttributes =
            new FaceAttributeType[]
            {
                FaceAttributeType.Gender,
                FaceAttributeType.Age,
                FaceAttributeType.Smile,
                FaceAttributeType.Emotion,
                FaceAttributeType.Glasses,
                FaceAttributeType.Hair
            };

        // Call the Face API.
        try
        {
            using (Stream imageFileStream = File.OpenRead(imageFilePath))
            {
                Face[] faces = await faceServiceClient.DetectAsync(
                    imageFileStream,
                    returnFaceId: true,
                    returnFaceLandmarks: false,
                    returnFaceAttributes: faceAttributes);
                return faces;
            }
        }
        // Catch and display Face API errors.
        catch (FaceAPIException f)
        {
            MessageBox.Show(f.ErrorMessage, f.ErrorCode);
            return new Face[0];
        }
        // Catch and display all other errors.
        catch (Exception e)
        {
            MessageBox.Show(e.Message, "Error");
            return new Face[0];
        }
    }

    // Returns a string that describes the given face.
    private string FaceDescription(Face face)
    {
        StringBuilder sb = new StringBuilder();

        sb.Append("Face: ");

        // Add the gender, age, and smile.
        sb.Append(face.FaceAttributes.Gender);
        sb.Append(", ");
        sb.Append(face.FaceAttributes.Age);
        sb.Append(", ");
        sb.Append(String.Format("smile {0:F1}%, ", face.FaceAttributes.Smile * 100));

        // Add the emotions. Display all emotions over 10%.
        sb.Append("Emotion: ");
        EmotionScores emotionScores = face.FaceAttributes.Emotion;
        if (emotionScores.Anger >= 0.1f)
            sb.Append(String.Format("anger {0:F1}%, ", emotionScores.Anger * 100));
        if (emotionScores.Contempt >= 0.1f)
            sb.Append(String.Format("contempt {0:F1}%, ", emotionScores.Contempt * 100));
        if (emotionScores.Disgust >= 0.1f)
            sb.Append(String.Format("disgust {0:F1}%, ", emotionScores.Disgust * 100));
        if (emotionScores.Fear >= 0.1f)
            sb.Append(String.Format("fear {0:F1}%, ", emotionScores.Fear * 100));
        if (emotionScores.Happiness >= 0.1f)
            sb.Append(String.Format("happiness {0:F1}%, ", emotionScores.Happiness * 100));
        if (emotionScores.Neutral >= 0.1f)
            sb.Append(String.Format("neutral {0:F1}%, ", emotionScores.Neutral * 100));
        if (emotionScores.Sadness >= 0.1f)
            sb.Append(String.Format("sadness {0:F1}%, ", emotionScores.Sadness * 100));
        if (emotionScores.Surprise >= 0.1f)
            sb.Append(String.Format("surprise {0:F1}%, ", emotionScores.Surprise * 100));

        // Add glasses.
        sb.Append(face.FaceAttributes.Glasses);
        sb.Append(", ");

        // Add hair.
        sb.Append("Hair: ");

        // Display baldness confidence if over 1%.
        if (face.FaceAttributes.Hair.Bald >= 0.01f)
            sb.Append(String.Format("bald {0:F1}% ", face.FaceAttributes.Hair.Bald * 100));

        // Display all hair color attributes over 10%.
        HairColor[] hairColors = face.FaceAttributes.Hair.HairColor;
        foreach (HairColor hairColor in hairColors)
        {
            if (hairColor.Confidence >= 0.1f)
            {
                sb.Append(hairColor.Color.ToString());
                sb.Append(String.Format(" {0:F1}% ", hairColor.Confidence * 100));
            }
        }

        // Return the built string.
        return sb.ToString();
    }

    private void FacePhoto_MouseMove(object sender, MouseEventArgs e)
    {
        // If the REST call has not completed, return from this method.
        if (faces == null)
            return;

        // Find the mouse position relative to the image.
        Point mouseXY = e.GetPosition(FacePhoto);

        ImageSource imageSource = FacePhoto.Source;
        BitmapSource bitmapSource = (BitmapSource)imageSource;

        // Scale adjustment between the actual size and displayed size.
        var scale = FacePhoto.ActualWidth / (bitmapSource.PixelWidth / resizeFactor);

        // Check if this mouse position is over a face rectangle.
        bool mouseOverFace = false;

        for (int i = 0; i < faces.Length; ++i)
        {
            FaceRectangle fr = faces[i].FaceRectangle;
            double left = fr.Left * scale;
            double top = fr.Top * scale;
            double width = fr.Width * scale;
            double height = fr.Height * scale;

            // Display the face description for this face if the mouse is over this face rectangle.
            if (mouseXY.X >= left && mouseXY.X <= left + width &&
                mouseXY.Y >= top && mouseXY.Y <= top + height)
            {
                faceDescriptionStatusBar.Text = faceDescriptions[i];
                mouseOverFace = true;
                break;
            }
        }

        // If the mouse is not over a face rectangle.
        if (!mouseOverFace)
            faceDescriptionStatusBar.Text = "Place the mouse pointer over a face to see the face description.";
    }
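The hit-testing loop in the mouse-move handler can be looked at on its own, without any WPF types. The following is a minimal sketch of the same check as a pure function. The `FaceHitTest` class and its `Box` type are hypothetical stand-ins introduced here for illustration. In the real handler the rectangles come from `faces[i].FaceRectangle` and the point comes from `e.GetPosition(FacePhoto)`.

```csharp
using System;

// Hypothetical helper, for illustration only: the same point-in-rectangle
// test that FacePhoto_MouseMove performs, without WPF dependencies.
static class FaceHitTest
{
    // Stand-in for the Left/Top/Width/Height values of a face rectangle, in image pixels.
    public struct Box
    {
        public int Left, Top, Width, Height;

        public Box(int left, int top, int width, int height)
        {
            Left = left;
            Top = top;
            Width = width;
            Height = height;
        }
    }

    // Returns the index of the first box that contains (x, y) after scaling,
    // or -1 when the point is not over any box.
    public static int IndexAt(Box[] boxes, double x, double y, double scale)
    {
        for (int i = 0; i < boxes.Length; ++i)
        {
            double left = boxes[i].Left * scale;
            double top = boxes[i].Top * scale;
            double width = boxes[i].Width * scale;
            double height = boxes[i].Height * scale;

            if (x >= left && x <= left + width && y >= top && y <= top + height)
            {
                return i;
            }
        }
        return -1;
    }
}
```

With one box at (62, 36) of size 44 x 44 and a scale of 0.5, the scaled region spans x from 31 to 53 and y from 18 to 40, so the point (40, 30) matches index 0 while (60, 30) matches nothing. Returning -1 plays the role of the `mouseOverFace` flag in the handler.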

In this part, we saw that with very little code we can use the Microsoft Cognitive Services Face API.

EMOTION API in Azure Cognitive Service

Now, let’s create a C# Console Application to test Microsoft Cognitive Services Emotion API.

    using System;
    using System.Collections.Generic;
    using System.Linq;
    using System.Text;
    using System.Threading.Tasks;
    using System.IO;
    using System.Net.Http;
    using System.Net.Http.Headers;

    namespace Emotion_API_Tutorial
    {
        class Program
        {
            static void Main(string[] args)
            {
                Console.WriteLine("Enter the path to a JPEG image file:");
                string imageFilePath = Console.ReadLine();

                // Starts the request without waiting; the ReadLine below keeps the
                // process alive until the response has been printed.
                MakeRequest(imageFilePath);

                Console.WriteLine("\n\n\nWait for the result below, then hit ENTER to exit...\n\n\n");
                Console.ReadLine();
            }

            static byte[] GetImageAsByteArray(string imageFilePath)
            {
                // Dispose the stream and reader once the bytes have been read.
                using (FileStream fileStream = new FileStream(imageFilePath, FileMode.Open, FileAccess.Read))
                using (BinaryReader binaryReader = new BinaryReader(fileStream))
                {
                    return binaryReader.ReadBytes((int)fileStream.Length);
                }
            }

            static async void MakeRequest(string imageFilePath)
            {
                try
                {
                    var client = new HttpClient();

                    // Request headers
                    client.DefaultRequestHeaders.Add("Ocp-Apim-Subscription-Key", "<Enter Your Key Value>");

                    // Endpoint URL
                    string uri = "https://westus.api.cognitive.microsoft.com/emotion/v1.0/recognize?";
                    HttpResponseMessage response;
                    string responseContent;

                    // Request body.
                    byte[] byteData = GetImageAsByteArray(imageFilePath);

                    using (var content = new ByteArrayContent(byteData))
                    {
                        // This example uses content type "application/octet-stream".
                        // The other content types you can use are "application/json" and "multipart/form-data".
                        content.Headers.ContentType = new MediaTypeHeaderValue("application/octet-stream");
                        response = await client.PostAsync(uri, content);

                        // Await the body instead of blocking on .Result.
                        responseContent = await response.Content.ReadAsStringAsync();
                    }

                    // A peek at the JSON response.
                    Console.WriteLine(responseContent);
                }
                catch (Exception ex)
                {
                    Console.WriteLine("Error occurred: " + ex.Message + ex.StackTrace);
                    Console.ReadLine();
                }
            }
        }
    }

JSON Response:

    [
      {
        "faceRectangle": {
          "height": 44,
          "left": 62,
          "top": 36,
          "width": 44
        },
        "scores": {
          "anger": 0.000009850864,
          "contempt": 1.073325e-8,
          "disgust": 0.00000230705427,
          "fear": 1.63113334e-9,
          "happiness": 0.9999875,
          "neutral": 1.00619431e-7,
          "sadness": 1.13927945e-9,
          "surprise": 2.365794e-7
        }
      }
    ]
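The console application only prints the raw JSON. One possible next step is to pick the highest-scoring emotion for each detected face. The following is a minimal sketch, assuming the response has the shape shown above (an array of objects, each with a `scores` object). It assumes `System.Text.Json` is available (built into .NET Core 3.0 and later, a NuGet package on .NET Framework). The `EmotionResponse` class and `DominantEmotions` method are hypothetical names introduced for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;

// Hypothetical helper, for illustration only: reads an Emotion API style
// response and reports the strongest emotion per face.
static class EmotionResponse
{
    // Returns the name of the highest-scoring emotion for each face in the array.
    public static List<string> DominantEmotions(string json)
    {
        var result = new List<string>();

        using (JsonDocument doc = JsonDocument.Parse(json))
        {
            foreach (JsonElement face in doc.RootElement.EnumerateArray())
            {
                string best = null;
                double bestScore = double.NegativeInfinity;

                // Walk every property of "scores" and keep the largest value.
                foreach (JsonProperty score in face.GetProperty("scores").EnumerateObject())
                {
                    double value = score.Value.GetDouble();
                    if (value > bestScore)
                    {
                        bestScore = value;
                        best = score.Name;
                    }
                }

                result.Add(best);
            }
        }

        return result;
    }
}
```

Passing the `responseContent` string from the console application to `EmotionResponse.DominantEmotions` would, for the sample response above, yield a single entry, `happiness`, since its score of 0.9999875 is far higher than all the others.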

Author Details:

Vipul Jain

LinkedIn Profile:- https://in.linkedin.com/in/vipul0309

Facebook Profile:- https://www.facebook.com/VipulJain0309

Twitter Profile:- https://twitter.com/jainvipulcs

You may read some popular blogs on SharePointCafe.Net